Facilitating Document Annotation Using Content and Querying Value

ABSTRACT:

A large number of organizations today generate and share textual descriptions of their products, services, and actions. Such collections of textual data contain a significant amount of structured information, which remains buried in the unstructured text. While information extraction algorithms facilitate the extraction of structured relations, they are often expensive and inaccurate, especially when operating on text that does not contain any instances of the targeted structured information. We present a novel alternative approach that facilitates the generation of structured metadata by identifying documents that are likely to contain information of interest, information that will subsequently be useful for querying the database. Our approach relies on the idea that humans are more likely to add the necessary metadata at creation time if prompted by the interface, or that it is much easier for humans (and/or algorithms) to identify the metadata when such information actually exists in the document, instead of naively prompting users to fill in forms with information that is not available in the document. As a major contribution of this paper, we present algorithms that identify structured attributes that are likely to appear within the document by jointly utilizing the content of the text and the query workload. Our experimental evaluation shows that our approach generates superior results compared to approaches that rely only on the textual content or only on the query workload to identify attributes of interest.
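
The joint use of text content and query workload can be illustrated with a small Java sketch (Java 8 or later). It is not the algorithm from the paper. The following are all hypothetical choices made for illustration:

· the keyword-based content score;

· the query-frequency workload score;

· the linear mixing weight LAMBDA;

· every class, method, and attribute name.

```java
import java.util.*;

// Hypothetical sketch, not the paper's algorithm: rank candidate attributes for
// one document by mixing a content score with a query-workload score.
class AttributeRanker {

    // Assumed mixing weight: 1.0 uses content only, 0.0 uses the workload only.
    static final double LAMBDA = 0.5;

    // Content score: fraction of an attribute's (assumed) cue words found in the text.
    static double contentScore(Set<String> docTerms, List<String> cueWords) {
        if (cueWords.isEmpty()) return 0.0;
        int hits = 0;
        for (String w : cueWords) {
            if (docTerms.contains(w)) hits++;
        }
        return (double) hits / cueWords.size();
    }

    // Workload score: fraction of past queries that filter on the attribute.
    static double workloadScore(List<Set<String>> workload, String attribute) {
        if (workload.isEmpty()) return 0.0;
        int hits = 0;
        for (Set<String> query : workload) {
            if (query.contains(attribute)) hits++;
        }
        return (double) hits / workload.size();
    }

    // Attribute names sorted by combined score, highest first; ties broken by name.
    static List<String> rank(String document, Map<String, List<String>> cueWords,
                             List<Set<String>> workload) {
        Set<String> docTerms =
            new HashSet<>(Arrays.asList(document.toLowerCase().split("\\W+")));
        final Map<String, Double> score = new HashMap<>();
        for (Map.Entry<String, List<String>> e : cueWords.entrySet()) {
            score.put(e.getKey(), LAMBDA * contentScore(docTerms, e.getValue())
                    + (1 - LAMBDA) * workloadScore(workload, e.getKey()));
        }
        List<String> ranked = new ArrayList<>(score.keySet());
        ranked.sort((a, b) -> {
            int byScore = Double.compare(score.get(b), score.get(a));
            return byScore != 0 ? byScore : a.compareTo(b);
        });
        return ranked;
    }

    // Helper: one workload query, represented as the set of attributes it uses.
    static Set<String> query(String... attributes) {
        return new HashSet<>(Arrays.asList(attributes));
    }

    public static void main(String[] args) {
        Map<String, List<String>> cues = new HashMap<>();
        cues.put("StormCategory", Arrays.asList("storm", "category"));
        cues.put("WindSpeed", Arrays.asList("wind", "winds", "mph"));
        cues.put("Location", Arrays.asList("coast", "city", "county"));
        cues.put("Casualties", Arrays.asList("killed", "injured"));

        List<Set<String>> workload = Arrays.asList(
            query("StormCategory"), query("StormCategory", "Location"),
            query("Casualties"), query("WindSpeed", "StormCategory"));

        String doc = "Storm reached category 3 with winds of 120 mph near the coast.";
        System.out.println(rank(doc, cues, workload));
        // prints [StormCategory, WindSpeed, Location, Casualties]
    }
}
```

In this toy run, three attributes (Casualties, WindSpeed, and Location) each appear in exactly one query of the workload. Casualties still ranks last because nothing in the text supports it. A form built from this ranking would therefore not prompt the author for information the document lacks.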

EXISTING SYSTEM:

Many annotation systems allow only “untyped” keyword annotation: for instance, a user may annotate a weather report using a tag such as “Storm Category 3”. Annotation strategies that use attribute-value pairs are generally more expressive, as they can contain more information than untyped approaches. In such settings, the above information can be entered as (StormCategory, 3). A recent line of work towards using more expressive queries that leverage such annotations is the “pay-as-you-go” querying strategy in Dataspaces [2]: in Dataspaces, users provide data integration hints at query time. The assumption in such systems is that the data sources already contain structured information and the problem is to match the query attributes with the source attributes. Many systems, though, do not even have the basic “attribute-value” annotation that would make “pay-as-you-go” querying feasible. Annotations that use “attribute-value” pairs require users to be more principled in their annotation efforts. Users should know the underlying schema and the field types to use; they should also know when to use each of these fields. With schemas that often have tens or even hundreds of available fields to fill, this task becomes complicated and cumbersome. This leads data-entry users to ignore such annotation capabilities.
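
The difference in expressiveness can be sketched in plain Java with two hypothetical weather reports, one of category 1 and one of category 3. The class and method names are illustrative only. A keyword search for “storm category” matches both untyped tags. The attribute-value form (StormCategory, n) instead supports a structured predicate such as StormCategory >= 3.

```java
import java.util.*;

// Illustrative sketch: one fact stored as an untyped tag versus as an
// attribute-value pair. All names here are hypothetical.
class AnnotationDemo {

    // Typed annotation, e.g. (StormCategory, 3).
    static final class AttributeValue {
        final String attribute;
        final String value;
        AttributeValue(String attribute, String value) {
            this.attribute = attribute;
            this.value = value;
        }
    }

    // Untyped tags support only substring matching on the whole tag text.
    static boolean keywordMatch(List<String> tags, String keyword) {
        for (String tag : tags) {
            if (tag.toLowerCase().contains(keyword.toLowerCase())) return true;
        }
        return false;
    }

    // Typed annotations support a structured predicate: attribute >= threshold.
    static boolean atLeast(List<AttributeValue> annotations, String attribute, int threshold) {
        for (AttributeValue av : annotations) {
            if (!av.attribute.equals(attribute)) continue;
            try {
                if (Integer.parseInt(av.value) >= threshold) return true;
            } catch (NumberFormatException e) {
                // Non-numeric value: it cannot satisfy a numeric predicate.
            }
        }
        return false;
    }

    public static void main(String[] args) {
        List<String> weakTags = Arrays.asList("Storm Category 1");
        List<String> strongTags = Arrays.asList("Storm Category 3");
        List<AttributeValue> weak = Arrays.asList(new AttributeValue("StormCategory", "1"));
        List<AttributeValue> strong = Arrays.asList(new AttributeValue("StormCategory", "3"));

        // Query intent: "reports about storms of category 3 or higher".
        System.out.println(keywordMatch(weakTags, "storm category"));   // true (false positive)
        System.out.println(keywordMatch(strongTags, "storm category")); // true
        System.out.println(atLeast(weak, "StormCategory", 3));          // false
        System.out.println(atLeast(strong, "StormCategory", 3));        // true
    }
}
```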

DISADVANTAGES OF EXISTING SYSTEM:

· Creating annotation information is costly.

· The existing system makes errors in its suggestions.

PROPOSED SYSTEM:

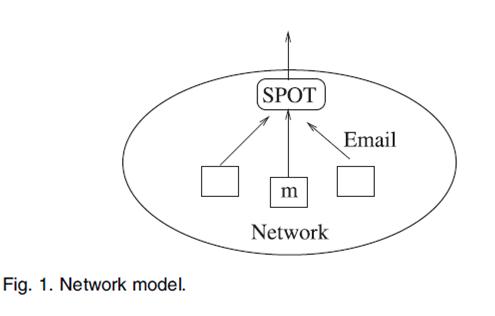

In this paper, we propose CADS (Collaborative Adaptive Data Sharing platform), an “annotate-as-you-create” infrastructure that facilitates fielded data annotation. A key contribution of our system is the direct use of the query workload to guide the annotation process, in addition to examining the content of the document. In other words, we try to prioritize the annotation of documents towards generating attribute values for attributes that are often used by querying users. The goal of CADS is to encourage, and lower the cost of, creating well-annotated documents that can be immediately useful for commonly issued semi-structured queries such as the ones. Our key goal is to encourage the annotation of documents at creation time, while the creator is still in the “document generation” phase, although the techniques can also be used for post-generation document annotation.

In our scenario, the author generates a new document and uploads it to the repository. After the upload, CADS analyzes the text and creates an adaptive insertion form. The form contains the best attribute names given the document text and the information need (query workload), and the most probable attribute values given the document text. The author (creator) can inspect the form, modify the generated metadata as necessary, and submit the annotated document for storage.
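
The form-building step can be sketched in plain Java as follows, assuming the attributes have already been ranked using the document text and the query workload. This is a hypothetical illustration, not the CADS implementation. Each of the following is an assumption:

· the top-k cut-off;

· the class and method names;

· the per-attribute regular expressions, which stand in for a real value-prediction model.

```java
import java.util.*;
import java.util.regex.*;

// Hypothetical sketch of building an adaptive insertion form: keep the top-k
// ranked attributes and pre-fill each with a value found in the text. The
// per-attribute regular expressions stand in for a real value extractor.
class InsertionFormBuilder {

    // Returns an ordered map: attribute name -> suggested value ("" if none found).
    static LinkedHashMap<String, String> buildForm(String document,
                                                   List<String> rankedAttributes,
                                                   Map<String, Pattern> valuePatterns,
                                                   int k) {
        LinkedHashMap<String, String> form = new LinkedHashMap<>();
        for (String attribute : rankedAttributes) {
            if (form.size() >= k) break;          // keep only the k best attributes
            String suggestion = "";
            Pattern p = valuePatterns.get(attribute);
            if (p != null) {
                Matcher m = p.matcher(document);
                if (m.find()) suggestion = m.group(1); // first match as the suggested value
            }
            form.put(attribute, suggestion);      // the author may edit this before submitting
        }
        return form;
    }

    public static void main(String[] args) {
        String doc = "Storm reached category 3 with winds of 120 mph near the coast.";
        List<String> ranked = Arrays.asList("StormCategory", "WindSpeed", "Location", "Casualties");
        Map<String, Pattern> patterns = new HashMap<>();
        patterns.put("StormCategory", Pattern.compile("(?i)category\\s+(\\d)"));
        patterns.put("WindSpeed", Pattern.compile("(\\d+)\\s*mph"));
        System.out.println(buildForm(doc, ranked, patterns, 3));
        // prints {StormCategory=3, WindSpeed=120, Location=}
    }
}
```

In this run, Location gets an empty value. The author can then fill that field in or discard it, in line with the inspect, modify, and submit workflow described above.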

ADVANTAGES OF PROPOSED SYSTEM:

· We present an adaptive technique for automatically generating data input forms for annotating unstructured textual documents, such that the utilization of the inserted data is maximized, given the user information needs.

· We create principled probabilistic methods and algorithms to seamlessly integrate information from the query workload into the data annotation process, in order to generate metadata that are not just relevant to the annotated document, but also useful to the users querying the database.

· We present extensive experiments with real data and real users, showing that our system generates accurate suggestions that are significantly better than the suggestions from alternative approaches.

SYSTEM CONFIGURATION:-

HARDWARE CONFIGURATION:-

· Processor - Pentium IV

· Speed - 1.1 GHz

· RAM - 256 MB (min)

· Hard Disk - 20 GB

· Keyboard - Standard Windows Keyboard

· Mouse - Two or Three Button Mouse

· Monitor - SVGA

SOFTWARE CONFIGURATION:-

· Operating System : Windows XP

· Programming Language : Java

· Java Version : JDK 1.6 and above

REFERENCE:

Eduardo J. Ruiz, Vagelis Hristidis, and Panagiotis G. Ipeirotis, “Facilitating Document Annotation Using Content and Querying Value,” IEEE Transactions on Knowledge and Data Engineering, 2013.